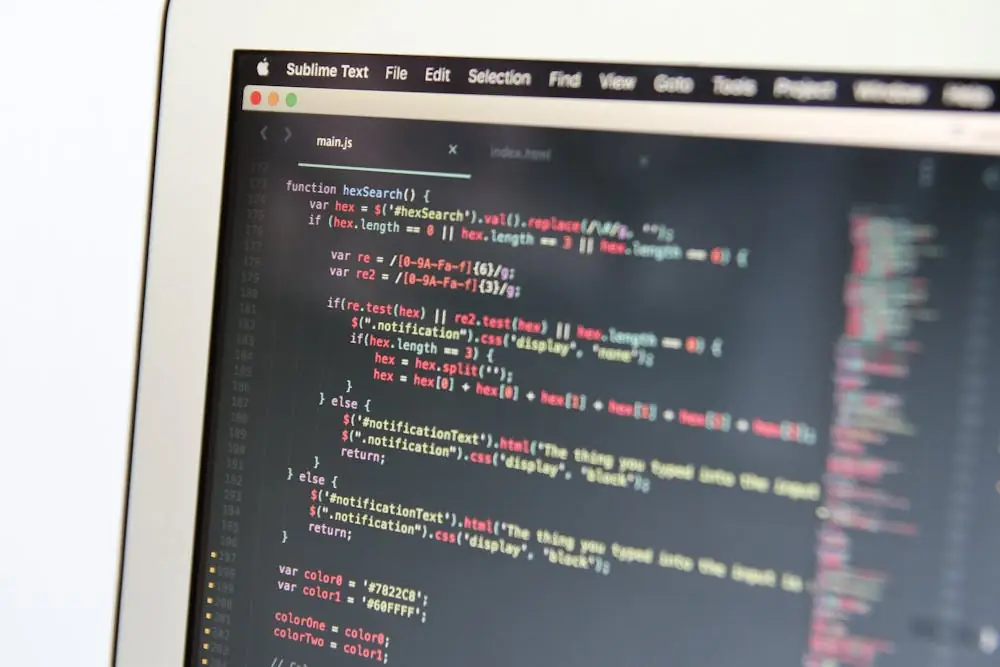

Your GPUs.

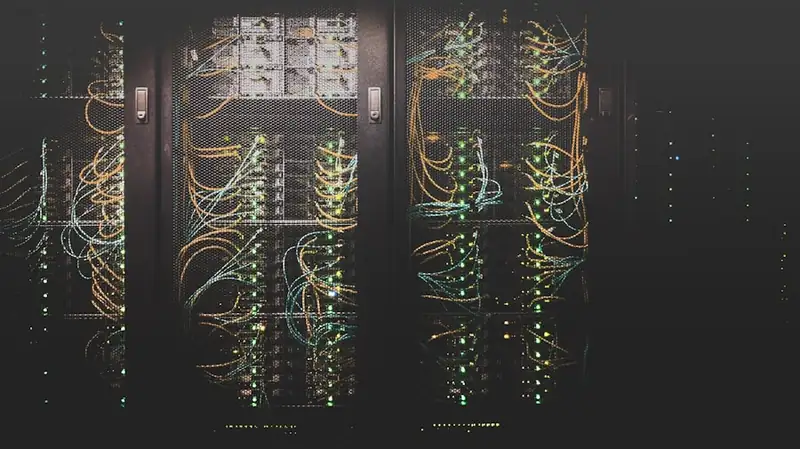

Inside the building.

Closer to where AI runs.

Centralized clouds put your inference 30 ms and a thousand miles away. ARO opens single-tenant GPU sites inside hotels, apartments, hospitals, and offices across the U.S. — built for AI inference, IoT, autonomous-systems compute, real-time CV, and every GPU-intensive workload that runs better next to its data. Enterprise hardware. Fiber backhaul. Multi-year reservations. Real human support.

The hyperscalers built for training. The world is shipping inference.

Inference belongs near the data.

You don't need a 500-megawatt campus in Loudoun County to run a 70B model on a video stream from a building in Tampa. You need 96 GB of VRAM in the basement, single-tenant, with 100 GbE out the back. That's what ARO ships.

Centralized GPU cloud

Your inference call leaves the building, crosses two states, lands in a multi-tenant pool, and waits its turn behind whoever booked first.

- 20–60 ms round trip before a token is generated

- Shared GPUs, shared neighbors, surprise queue depth

- Egress fees on the data you didn't want to move

- Quarterly capacity scrums for a customer your size

GPUs in the building, reserved for you

Your inference call hits a hub in the same metro, on hardware you reserved, with neighbors you don't have.

- Sub-10 ms tenant-to-hub target latency

- Single-tenant nodes — predictable performance, no waits

- Local ingest, local compute, less data to backhaul

- Multi-year reservations, real human on speed-dial
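Part of the gap between the 20–60 ms centralized round trip and the sub-10 ms in-metro target comes from physics. The sketch below estimates propagation delay only. It assumes light travels about 200,000 km/s in optical fiber over a straight-line path. The 50 km metro distance is an assumed example, not an ARO figure. Real routes are longer than a straight line and add switching, queuing, and serialization delay on top.

```python
# Propagation-only round-trip time over fiber. This shows how much of a latency
# budget distance alone consumes, before any compute happens.
# Assumption: ~200,000 km/s in glass (about two-thirds of c), straight-line path.
# Real networks add path inflation, switching, and queuing on top of this.

FIBER_KM_PER_S = 200_000
KM_PER_MILE = 1.609344

def propagation_rtt_ms(distance_km: float) -> float:
    """Round-trip propagation delay in milliseconds for a one-way distance."""
    return 2 * distance_km / FIBER_KM_PER_S * 1000

remote_region = propagation_rtt_ms(1000 * KM_PER_MILE)  # "a thousand miles away"
same_metro = propagation_rtt_ms(50)                     # assumed 50 km metro hop

print(f"1,000 miles: {remote_region:.1f} ms RTT before any compute")
print(f"50 km metro: {same_metro:.1f} ms RTT before any compute")
```

Under these assumptions, a thousand miles costs about 16 ms of round trip in the glass alone. A same-metro hop costs about half a millisecond, which leaves most of a 10 ms budget for the network and the model.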

Pick a workload, pick a footprint, see what you'd get.

Indicative throughput on Blackwell-class hardware. Real numbers depend on model, quantization, sequence length, and tenancy. We size every reservation to your actual workload.

Tell us what you're running.

Built for inference at the edge.

Three things separate ARO from the centralized GPU clouds and the hyperscaler training clusters.

Distributed by design

Hubs sit inside hotels, apartment buildings, hospitals, and offices, co-located with the workloads they support — AI inference, IoT pipelines, autonomous-systems compute, real-time CV. No backhaul cost to a remote region.

Single-tenant by default

Dedicated nodes, not shared multi-tenant pools. Predictable performance, predictable costs, an isolated security boundary, and no surprise wait time.

Capacity, not waiting in line

Capacity is reserved up front, so there is no bursting against neighbors. Reserve in 8-, 16-, 32-, 64-, 128-, or 256-GPU increments on multi-year terms.
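As a rough illustration of how a workload maps onto those increments, the sketch below converts a peak-load estimate into a GPU count and rounds it up to the smallest block that covers it. The function names, the throughput inputs, and the assumption that a reservation is a single block are all hypothetical. In practice, per-GPU throughput is something you would measure for your own model, and actual sizing is done with the capacity team.

```python
# Hypothetical sizing sketch: peak load -> GPU count -> smallest reservation block.
# Inputs are illustrative placeholders, not ARO-published throughput numbers.
import math

RESERVATION_BLOCKS = (8, 16, 32, 64, 128, 256)  # GPU increments listed on this page

def gpus_for_load(peak_requests_per_s: float, requests_per_s_per_gpu: float) -> int:
    """GPUs needed to serve peak load, rounded up to a whole GPU."""
    if requests_per_s_per_gpu <= 0:
        raise ValueError("per-GPU throughput must be positive")
    return math.ceil(peak_requests_per_s / requests_per_s_per_gpu)

def smallest_block(gpus_needed: int) -> int:
    """Smallest single reservation block that covers gpus_needed."""
    for block in RESERVATION_BLOCKS:
        if gpus_needed <= block:
            return block
    raise ValueError(f"{gpus_needed} GPUs exceeds the largest single block (256)")

# Illustrative inputs: 900 req/s at peak, an assumed 40 req/s per GPU.
needed = gpus_for_load(peak_requests_per_s=900, requests_per_s_per_gpu=40)
print(needed, "GPUs needed ->", smallest_block(needed), "GPU reservation")
```

With these placeholder inputs, 22.5 GPUs round up to 23, and the smallest block that covers 23 is the 32-GPU increment. The sketch does not say whether requirements above 256 GPUs can be met by combining blocks. That question belongs to the capacity team.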

How an ARO hub plugs into your operations.

Local data ingest, on-premises compute, and low-latency outputs, without trucking your data to a remote hyperscaler.

Enterprise components, validated reference designs.

A condensed view of what ships at every site. Specs depend on configuration; the full data sheet lives on the hardware page.

What gets installed at every site.

Three building blocks: enterprise-grade compute, redundant fiber, and liquid-assisted cooling. Hardware shown is representative of the Dell-validated reference architecture we deploy.

Built for AI, IoT, autonomous systems, and the workloads that need to live at the edge.

AI inference is the lead workload today. The same hardware runs IoT pipelines, autonomous-systems compute, real-time computer vision, and any GPU-intensive workload that benefits from sitting close to where data is generated.

Production inference of 7B–70B-parameter models

A single Blackwell-class card runs a 70B-parameter model at FP4 with headroom left for the KV cache. Ideal for OEM, RAG, and agentic deployments where latency matters.
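The FP4 claim comes down to simple memory arithmetic. The minimal sketch below assumes a 96 GB card, the figure quoted earlier on this page. It also assumes a Llama-style 70B architecture with 80 layers, 8 grouped-query KV heads, head dimension 128, and an FP16 KV cache. These architecture numbers are assumptions, not ARO specifications. The sketch ignores quantization scale factors, activations, and runtime overhead, so the resulting token count is an upper bound.

```python
# Back-of-envelope VRAM budget for a 70B model at FP4 on a 96 GB card.
# Architecture numbers are assumptions (Llama-style 70B with grouped-query attention).
# Ignores quantization scales, activations, and runtime overhead: an upper bound.

GB = 10**9  # decimal gigabytes

def weight_bytes(n_params: float, bits_per_param: float) -> float:
    """Memory for model weights at a given precision."""
    return n_params * bits_per_param / 8

def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                       bytes_per_elem: int) -> int:
    """Bytes for the K and V tensors of one token across all layers."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem

vram = 96 * GB
weights = weight_bytes(70e9, 4)   # 4-bit (FP4) weights
headroom = vram - weights         # what's left for KV cache (and, in reality, overhead)
per_token = kv_bytes_per_token(layers=80, kv_heads=8, head_dim=128,
                               bytes_per_elem=2)  # FP16 KV cache (assumed)
max_cached_tokens = int(headroom // per_token)

print(f"weights:  {weights / GB:.1f} GB")
print(f"headroom: {headroom / GB:.1f} GB")
print(f"KV/token: {per_token / 1e6:.2f} MB")
print(f"upper bound on cached tokens: {max_cached_tokens:,}")
```

Under these assumptions, the 4-bit weights take about 35 GB. That leaves roughly 61 GB, which on paper holds about 186,000 tokens of KV cache shared across concurrent requests. Real serving stacks reserve part of that headroom for their own use.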

Medical imaging and clinical inference

Radiology, pathology, and clinical decision support with data residency, audit logging, and isolated tenant environments by design.

Sensor pipelines and property-resident vision

Hotel, retail, building-systems, and smart-city sensor data processed on-property. Lower bandwidth costs, lower latency, sensor data stays local.

Edge inference for autonomous systems

Vehicle and robotics fleets need GPU inference within milliseconds of the sensor. Distributed hubs put compute next to the operating environment, with single-tenant guarantees the safety case requires.

Manufacturing edge and connected operations

Predictive maintenance, defect detection, and process optimization on the factory floor. GPU-accelerated inference at the site, with data sovereignty over sensor and process telemetry.

Data-residency-sensitive enterprise inference

Single-tenant deployments kept inside specific regions, for financial, legal, and healthcare workloads where compliance posture matters more than burst capacity.

Built and run like enterprise infrastructure.

We own the hardware. We monitor it. We support it. Our hubs are designed for the operating standards a serious AI customer expects.

- 24/7 monitoring & NOC oversight: continuous health checks, telemetry, and alerting on every node.

- Dell-backed maintenance: 5-year warranty, ProSupport, and on-site break-fix.

- Insured equipment: property and cyber liability coverage carried by ARO.

- Compliance roadmap: SOC 2 Type I in flight, with HIPAA and ISO 27001 sequenced.

Selected for what holds up in production.

Operators, not theorists.

Founder of Additional Revenue Opportunities (ARO) LLC. Three decades operating businesses at the intersection of communications, real-estate-resident infrastructure, and ancillary revenue. Leads ARO's site origination, capital strategy, and tenant relationships.

Senior sales and partnerships executive, 20+ years across hospitality, healthcare, retail, and multi-site enterprise. Marine Corps, Army, and State Department alumnus. Most recently led Samsung Electronics enterprise TV business development; previously helped scale Ruckus Wireless to 4,000+ hotel deployments and influenced more than $300M in partner-driven pipeline. Drives ARO's GPU-as-a-Service tenant pipeline.

Talk to our capacity team.

Sizing GPU capacity for the next 12–36 months? We'll walk you through what's coming online and where. The full reservation form lives on the contact page.